After a $30 Billion Bet, Nvidia Is Building a New AI Chip for OpenAI: How It Will Differ from Traditional GPUs

Nvidia is reportedly planning a new specialized AI chip for OpenAI following a $30 billion investment commitment. The move signals a shift in AI hardware strategy, from general-purpose acceleration to specialized silicon designed for specific AI workloads.

As AI adoption grows, demand is shifting from powerful training hardware alone toward faster, more efficient systems that can serve real-time AI responses at scale.

Why Nvidia Is Developing a New Chip

For years, Nvidia’s GPUs such as the A100 and H100 have powered the training and deployment of large AI models. These GPUs are powerful and versatile, designed to handle the massively parallel computations required to train large language models.

However, today’s AI landscape is changing. The biggest challenge is no longer only training models; it is also serving them efficiently to millions of users in real time. Applications like ChatGPT, AI assistants, and generative tools require:

- Low latency responses

- High throughput (handling many users simultaneously)

- Lower operational costs

- Better energy efficiency

Traditional GPUs, while powerful, are not fully optimized for these inference-heavy workloads.
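
Latency and throughput in the list above are linked. Little's law says that the average number of requests in flight equals the arrival rate multiplied by the average time each request spends in the system. A minimal Python sketch, using made-up traffic figures rather than anything reported for OpenAI or Nvidia, shows why cutting latency reduces how much work the hardware must hold at once:

```python
def requests_in_flight(requests_per_second: float, avg_latency_seconds: float) -> float:
    """Little's law: average concurrency = arrival rate x average time in system."""
    return requests_per_second * avg_latency_seconds


# Hypothetical traffic: 10,000 requests per second (an assumption, not a reported figure).
ARRIVAL_RATE = 10_000

slow = requests_in_flight(ARRIVAL_RATE, avg_latency_seconds=2.0)
fast = requests_in_flight(ARRIVAL_RATE, avg_latency_seconds=0.5)

print(f"2.0 s latency: {slow:.0f} requests in flight")
print(f"0.5 s latency: {fast:.0f} requests in flight")
```

At the same traffic level, a quarter of the latency means a quarter of the requests in flight, which is one reason lower latency can translate into less hardware for the same load.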

GPU vs. the New Inference-Focused Chip

Traditional Nvidia GPUs

- Designed as general-purpose parallel processors

- Excellent for training large AI models

- Flexible and widely used across industries

- Higher power consumption for large-scale inference

- More expensive per AI query when used at massive scale

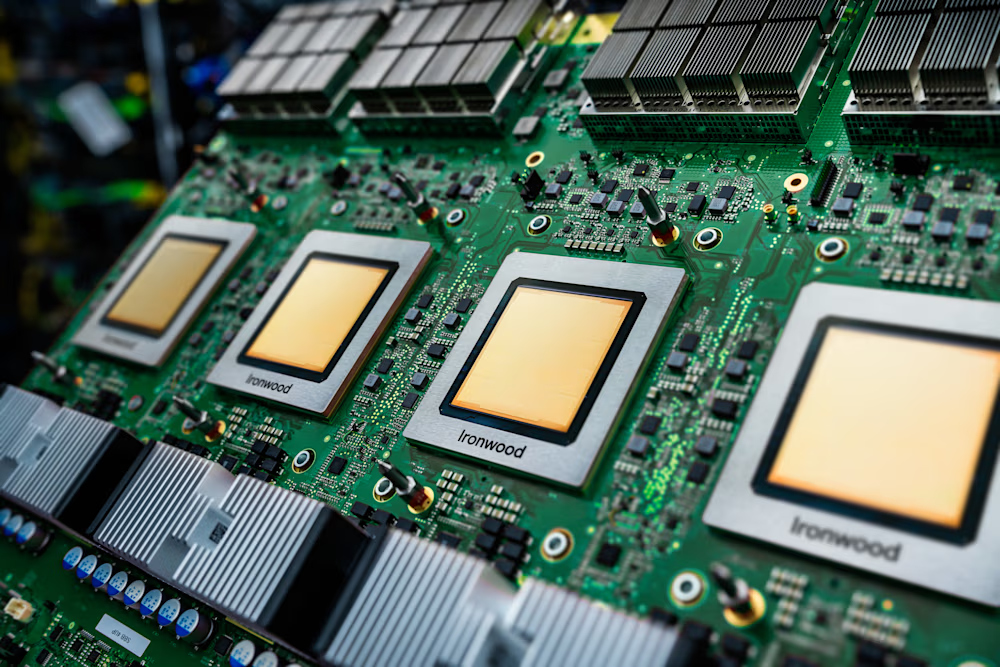

Nvidia’s New AI Chip (Reported)

- Designed specifically for AI inference rather than training

- Optimized for faster response times and lower latency

- Higher efficiency and lower cost per request

- Better energy performance for large-scale deployments

- Tailored architecture for language models and real-time AI services

In simple terms, GPUs are powerful all-purpose engines, while the new chip is expected to be a specialized system built specifically for fast AI responses.

What This Means for OpenAI

OpenAI operates some of the world’s most heavily used AI systems, serving hundreds of millions of users. Running large language models at this scale requires enormous computing resources.

A custom or optimized inference chip could help OpenAI:

- Reduce infrastructure costs

- Improve response speed for users

- Scale services more efficiently

- Support future, larger models without a matching jump in cost

At the same time, this partnership strengthens Nvidia’s position as the dominant hardware provider in the AI ecosystem.

The Bigger Industry Trend

This development reflects a broader shift happening across the AI industry:

- Inference is becoming the main workload: As AI moves into everyday products, real-time usage is growing faster than model training.

- Specialized AI hardware is the future: Companies are moving toward dedicated chips for training, inference, and edge AI.

- Efficiency is the new competitive advantage: The focus is shifting from raw power to performance-per-dollar and performance-per-watt.
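
To make performance-per-dollar and performance-per-watt concrete, here is a rough Python sketch of how the two metrics might be computed for an inference system. The `Accelerator` class and every number in it are hypothetical placeholders chosen for easy arithmetic; they are not measurements or specifications of any Nvidia product.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    requests_per_second: float  # sustained inference throughput
    power_watts: float          # average power draw under load
    cost_per_hour_usd: float    # amortized hardware plus hosting cost

    def requests_per_dollar(self) -> float:
        # Requests served in one hour divided by the cost of that hour.
        return self.requests_per_second * 3600 / self.cost_per_hour_usd

    def requests_per_joule(self) -> float:
        # A watt is one joule per second, so this is requests per joule.
        return self.requests_per_second / self.power_watts


# Hypothetical figures for illustration only.
general_gpu = Accelerator("general-purpose GPU", 100.0, 500.0, 4.0)
inference_chip = Accelerator("inference-focused chip", 150.0, 500.0, 3.0)

for chip in (general_gpu, inference_chip):
    print(f"{chip.name}: {chip.requests_per_dollar():.0f} requests per dollar, "
          f"{chip.requests_per_joule():.2f} requests per joule")
```

Under these assumed inputs, the specialized chip comes out ahead on both metrics without needing more raw power, which is the kind of comparison buyers of inference hardware are likely to make.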

Major tech companies are already investing in custom silicon, and Nvidia’s reported move suggests it is looking beyond traditional GPUs to maintain its leadership.

What to Expect Next

The new chip is expected to be formally introduced at upcoming Nvidia developer events, where more details about its architecture, performance, and deployment plans may be revealed.

If successful, this hardware could:

- Make AI services faster and more responsive

- Lower the cost of running large-scale AI platforms

- Accelerate the global adoption of AI-powered applications

Conclusion

Nvidia’s reported plan to develop a new AI chip specifically for OpenAI would mark an important milestone in the evolution of AI infrastructure. The shift from general-purpose GPUs to specialized inference hardware reflects the growing maturity of the AI industry.

As AI moves from research to everyday use, the companies that can deliver faster, more efficient computing at scale will shape the next phase of the AI revolution.