Why Does Elon Musk Want to Put AI Data Centers in Space?

Artificial intelligence is advancing at an unprecedented pace, and the infrastructure required to support it is growing just as rapidly. Elon Musk has discussed the idea of placing AI data centers in space as a long-term, futuristic solution to some of the major challenges faced by Earth-based computing infrastructure. While still largely theoretical, the concept is driven by practical concerns around energy, cooling, scalability, and safety.

The Growing Energy Demand of AI

AI data centers consume enormous amounts of electricity. Training large AI models requires thousands of powerful processors running continuously for long periods. As AI adoption increases, the demand for energy is placing significant pressure on existing power grids. Space-based data centers could potentially use solar energy more efficiently: above the atmosphere, sunlight is unaffected by weather, and in suitably chosen orbits it is nearly continuous rather than interrupted by a day-night cycle. This could reduce reliance on Earth’s limited energy resources.
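To give a sense of scale, the sketch below estimates how much solar panel area a given power draw would need in orbit. It is a rough illustration, not a design figure: the function name `array_area_m2`, the 20% panel efficiency, and the 1 MW load are assumptions chosen for the example, and the calculation assumes full, perpendicular sunlight with no storage or conversion losses.

```python
# Back-of-the-envelope sizing of a solar array in orbit.
# Approximate intensity of sunlight above the atmosphere near Earth.
SOLAR_CONSTANT_W_M2 = 1361.0


def array_area_m2(power_w, efficiency=0.20, irradiance_w_m2=SOLAR_CONSTANT_W_M2):
    """Panel area needed to generate power_w in full, perpendicular sunlight.

    efficiency is a hypothetical panel efficiency (assumed, not from the article).
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return power_w / (efficiency * irradiance_w_m2)


if __name__ == "__main__":
    # Hypothetical 1 MW load, far smaller than a large AI data center.
    print(f"{array_area_m2(1e6):.0f} m^2 of panels for 1 MW")
```

Under these assumptions, each megawatt needs a few thousand square meters of panels, so the required area grows quickly at data-center power levels.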

Natural Cooling Advantages in Space

Cooling is one of the biggest operational costs for data centers. On Earth, massive cooling systems are required to prevent servers from overheating. In space, hardware can radiate waste heat toward the cold background of deep space, which offers an opportunity for passive heat dissipation without water or chillers. Heat management in space still presents serious engineering challenges, however: a vacuum has no air or water to carry heat away by convection, so all waste heat must leave through radiator panels, and these become very large at data-center power levels.
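One way to gauge the heat-management challenge is to estimate radiator size. In a vacuum, heat leaves a spacecraft by thermal radiation, which the Stefan-Boltzmann law describes as P = εσAT⁴. The sketch below solves that for the area A. The function name `radiator_area_m2`, the emissivity of 0.9, the 300 K radiator temperature, and the 1 MW heat load are assumptions for illustration. It treats the radiator as an ideal one-sided surface and ignores sunlight and Earth's infrared falling on it, so real radiators would need to be larger.

```python
# Rough radiator sizing from the Stefan-Boltzmann law: P = emissivity * sigma * A * T^4
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(heat_w, temp_k, emissivity=0.9):
    """Radiating area needed to reject heat_w at surface temperature temp_k.

    Idealized: one-sided radiator facing deep space, no absorbed sunlight
    or planetary infrared (assumptions made for this sketch).
    """
    if temp_k <= 0:
        raise ValueError("temp_k must be positive (kelvin)")
    if not 0 < emissivity <= 1:
        raise ValueError("emissivity must be in (0, 1]")
    return heat_w / (emissivity * STEFAN_BOLTZMANN * temp_k ** 4)


if __name__ == "__main__":
    # Hypothetical 1 MW of waste heat rejected at 300 K.
    print(f"{radiator_area_m2(1e6, 300.0):.0f} m^2 of radiator for 1 MW at 300 K")
```

Because radiated power scales with the fourth power of temperature, running the radiators hotter shrinks them sharply, but the electronics being cooled limit how hot the radiators can run.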

Reducing Environmental Impact on Earth

Large data centers require vast amounts of land, water, and electricity, often affecting local ecosystems. By moving some computing infrastructure off-planet, the environmental footprint on Earth could be reduced. This aligns with Musk’s broader vision of minimizing long-term strain on Earth’s resources while supporting technological growth.

Scalability Without Land Constraints

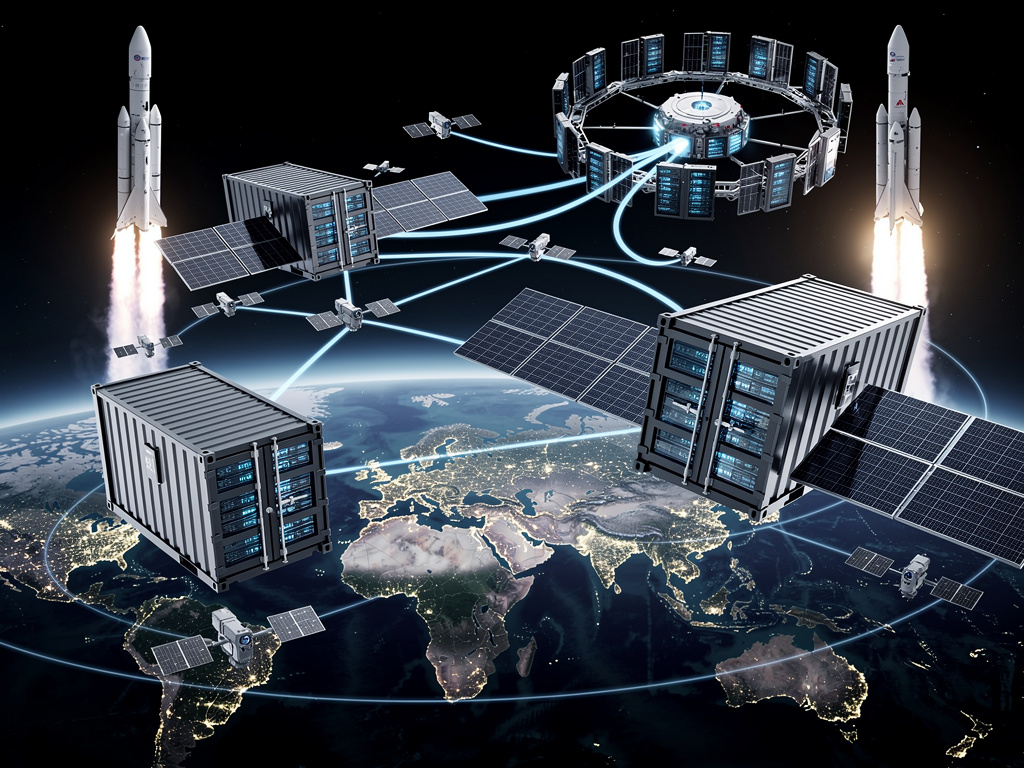

On Earth, building new data centers is limited by land availability, zoning laws, and infrastructure constraints. Space offers virtually unlimited room for expansion. Modular space-based data centers could be scaled up without competing for land or disrupting populated areas, making long-term growth more feasible as AI demands continue to rise.

Safety and Risk Containment

Another motivation behind the idea is risk management. Musk has frequently warned about the potential dangers of advanced artificial intelligence. Housing powerful AI systems off-planet could provide an additional layer of isolation, reducing the risk of unintended consequences affecting Earth directly. While this does not eliminate risk, it could act as a safeguard for experimental or highly advanced AI systems.

Leveraging SpaceX Capabilities

SpaceX’s advancements in reusable rockets significantly reduce the cost of sending payloads into orbit. This makes previously unrealistic ideas, such as space-based computing infrastructure, more technically and economically plausible. Musk’s control over both AI development and space launch technology positions him uniquely to explore such ambitious concepts.

Technical and Economic Challenges

Despite the potential advantages, placing AI data centers in space remains extremely challenging. Launch costs that remain high even with reusable rockets, the difficulty of maintaining or repairing hardware in orbit, radiation exposure, and communication latency are major obstacles. For now, the idea remains more of a long-term vision than an immediate plan.
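The latency obstacle can be given a lower bound from the speed of light alone. The sketch below computes the minimum round-trip time for a signal between the ground and a satellite directly overhead. The function name `round_trip_ms` and the 550 km low-orbit altitude are assumptions for illustration; 35,786 km is the altitude of geostationary orbit. Real latency would be higher once routing, processing, and slant-path geometry are included.

```python
# Lower bound on ground-to-orbit round-trip time, set by the speed of light.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def round_trip_ms(altitude_km):
    """Minimum round-trip signal time in milliseconds to a satellite
    directly overhead at altitude_km (straight up and back, no processing)."""
    if altitude_km < 0:
        raise ValueError("altitude_km must be non-negative")
    distance_m = 2 * altitude_km * 1000.0
    return distance_m / SPEED_OF_LIGHT_M_S * 1000.0


if __name__ == "__main__":
    # 550 km is a hypothetical low-Earth-orbit altitude; 35,786 km is geostationary.
    for name, altitude_km in [("low Earth orbit", 550.0), ("geostationary", 35_786.0)]:
        print(f"{name}: {round_trip_ms(altitude_km):.2f} ms minimum round trip")
```

Under these assumptions, a low orbit adds only a few milliseconds, while a geostationary orbit adds roughly a quarter of a second per round trip. Orbit choice therefore matters more for interactive workloads than for long-running training jobs.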

Conclusion

Elon Musk’s interest in space-based AI data centers reflects his broader approach to solving future problems before they become critical. The concept aims to address energy consumption, environmental impact, scalability, and AI safety in a world increasingly dependent on artificial intelligence. While the technology is not yet ready for practical implementation, the idea highlights how future AI infrastructure may extend beyond Earth as computing demands continue to grow.